Freeman Marketing Services LTD has launched Apps for Artists as a trading name.

The Apps for Artists name will cover the company’s various apps and software products.

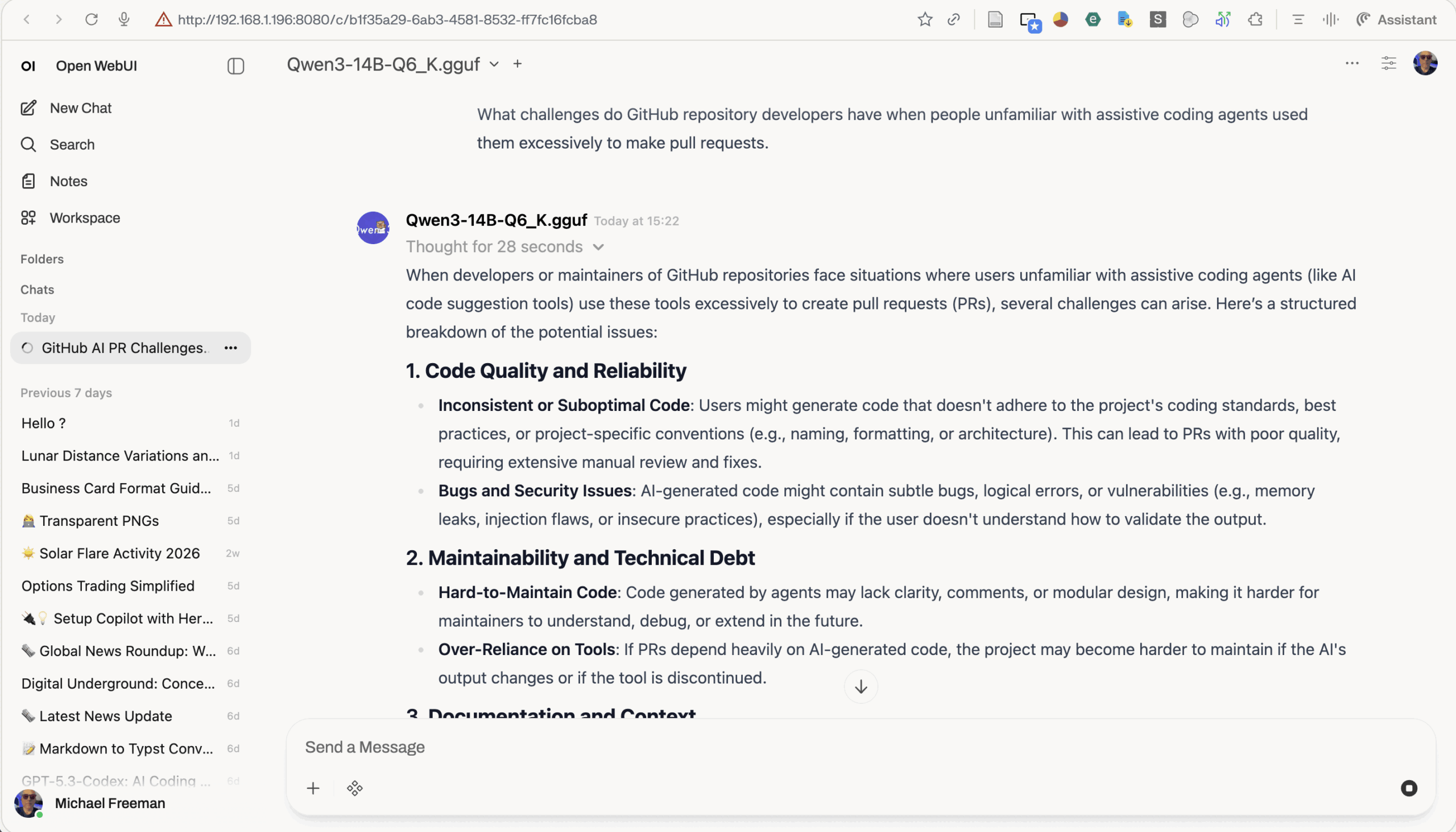

Above is a successful code edit by Aider. You can see the results running in a browser at this link. Until Aider fixed it, the theme drop-down was non-functional. This used Qwen3-14B-Q6_K running on a build of llama.cpp that I modified to implement Turbo Quant.

JavaScript is easy though! I’ll add a more in-depth example later.

The results are very interesting. So far, simple edits are functional, but more extensive development on larger code bases with a long context may not be here yet. That may happen sooner than we think, though! Turbo Quant is now being applied to the model itself by various projects, so 27B models can be shrunk down to fit on a 16 GB GPU.
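As a rough illustration of why quantisation matters here, the weights of a model take roughly parameters × bits per weight ÷ 8 bytes. The sketch below is back-of-envelope arithmetic only. The bits-per-weight figures are illustrative assumptions, not measurements of any Turbo Quant build, and `weight_size_gb` is a hypothetical helper name. It also ignores the KV cache, activations and runtime overhead, all of which need VRAM too.

```python
def weight_size_gb(params_billions: float, bits_per_weight: float) -> float:
    """Approximate size of the model weights in GB (1 GB = 10**9 bytes)."""
    total_bytes = params_billions * 1e9 * bits_per_weight / 8
    return total_bytes / 1e9


VRAM_GB = 16  # the GPU size mentioned in the text

# (label, parameters in billions, assumed bits per weight)
scenarios = [
    ("27B at 16 bits", 27, 16),
    ("27B at 4 bits", 27, 4),
    ("14B at 6.5 bits", 14, 6.5),
]

for label, params, bits in scenarios:
    size = weight_size_gb(params, bits)
    verdict = "fits" if size < VRAM_GB else "does not fit"
    print(f"{label}: about {size:.1f} GB of weights, {verdict} in {VRAM_GB} GB")
```

Under these assumptions a 27B model needs about 54 GB of weights at 16 bits but about 13.5 GB at 4 bits, which is why it only becomes a candidate for a 16 GB card once it is quantised.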

But it’s not just model size. It’s speed. With a local model on my consumer-level GPU, thinking is quite slow compared to the big data centre cloud models. This makes sense, as those models are often split across multiple GPUs running in parallel.

So a full production, professional coding environment may not yet be possible. But this testing shows that it is coming down the line very fast!

But my approach is a pragmatic one. First, I needed to verify the viability of a full coding environment. Second, it’s about determining what can be used locally. Not every useful AI task needs some huge data centre or £10,000 of local GPUs. So far Qwen3-14B-Q6_K seems a lot more viable as a chat AI with web search capability through Open WebUI. In fact, here it is in action …

The AMD AI Bundle is great!

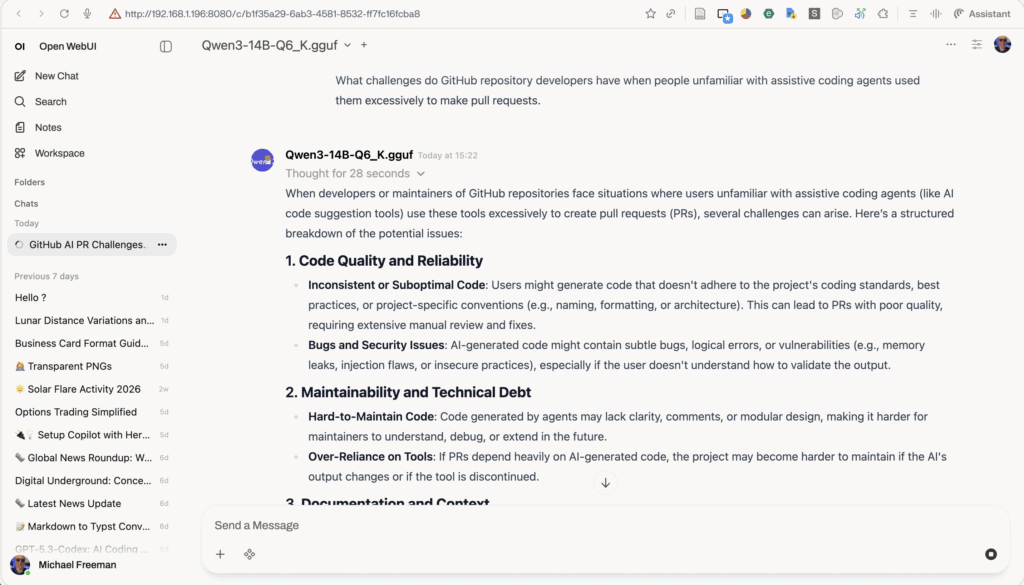

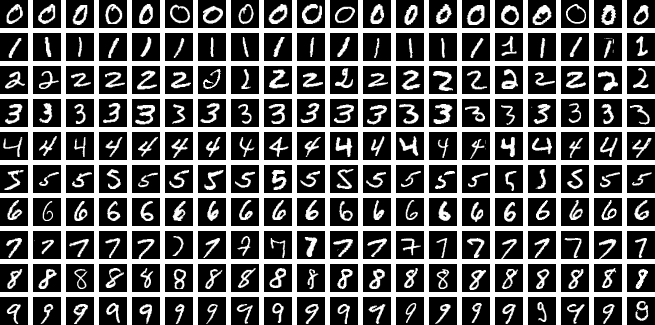

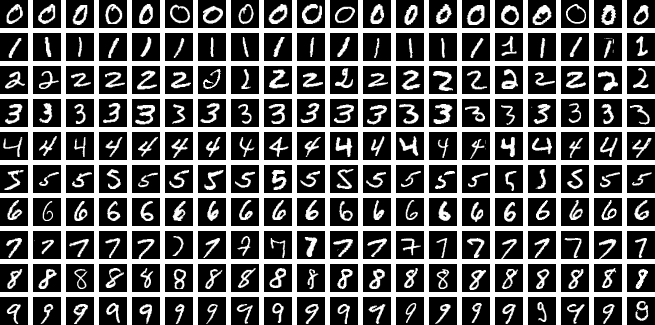

I set up a basic visual AI training run using the MNIST data set. This trains the AI (using my AMD GPU) to recognise handwritten numbers.

    import torch
    import torch.nn as nn
    import torch.optim as optim
    from torchvision import datasets, transforms

    # 1. Setup Device - The bundle maps AMD to 'cuda' for compatibility
    device = torch.device("cuda" if torch.cuda.is_available() else "cpu")
    # get_device_name() raises if no GPU is visible, so only call it on 'cuda'
    device_name = torch.cuda.get_device_name(0) if device.type == "cuda" else "CPU"
    print(f"Training on: {device_name}")

    # 2. Simple Neural Network
    class Net(nn.Module):
        def __init__(self):
            super(Net, self).__init__()
            self.fc1 = nn.Linear(784, 128)  # 28x28 pixels in, 128 hidden units
            self.fc2 = nn.Linear(128, 10)   # 10 outputs, one per digit

        def forward(self, x):
            x = torch.flatten(x, 1)
            x = torch.relu(self.fc1(x))
            return self.fc2(x)

    # 3. Data & Training Setup
    transform = transforms.Compose([transforms.ToTensor()])
    train_loader = torch.utils.data.DataLoader(
        datasets.MNIST('./data', train=True, download=True, transform=transform),
        batch_size=64)

    model = Net().to(device)
    optimizer = optim.SGD(model.parameters(), lr=0.01)
    criterion = nn.CrossEntropyLoss()

    # 4. Training Loop (Just 1 Epoch for speed)
    model.train()
    print("Starting training...")
    for batch_idx, (data, target) in enumerate(train_loader):
        data, target = data.to(device), target.to(device)
        optimizer.zero_grad()
        output = model(data)
        loss = criterion(output, target)
        loss.backward()
        optimizer.step()
        if batch_idx % 100 == 0:
            print(f"Batch {batch_idx}/{len(train_loader)} - Loss: {loss.item():.4f}")

    print("Training Complete! Your AMD GPU successfully calculated gradients.")

This gives …

    Training on: AMD Radeon RX 9060 XT
    100%|██████████████████████████████████████████████████████████████████████████████████████| 9.91M/9.91M [00:01<00:00, 7.77MB/s]
    100%|███████████████████████████████████████████████████████████████████████████████████████| 28.9k/28.9k [00:00<00:00, 313kB/s]
    100%|██████████████████████████████████████████████████████████████████████████████████████| 1.65M/1.65M [00:00<00:00, 2.98MB/s]
    100%|██████████████████████████████████████████████████████████████████████████████████████████████| 4.54k/4.54k [00:00<?, ?B/s]
    Starting training...
    Batch 0/938 - Loss: 2.2973
    Batch 100/938 - Loss: 2.0803
    Batch 200/938 - Loss: 1.8414
    Batch 300/938 - Loss: 1.3864
    Batch 400/938 - Loss: 1.1284
    Batch 500/938 - Loss: 0.9323
    Batch 600/938 - Loss: 0.7264
    Batch 700/938 - Loss: 0.8299
    Batch 800/938 - Loss: 0.7124
    Batch 900/938 - Loss: 0.6162
    Training Complete! Your AMD GPU successfully calculated gradients.

I wrote a custom visualiser to show what the AI can then do with that training. See the video at the top. I even selected some handwritten numbers from my photos to test.
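The visualiser itself isn’t reproduced here, but the step it depends on is simple: the network above outputs ten raw scores (logits), one per digit, and a softmax turns them into confidences. The following is a minimal pure-Python sketch of that step, not the actual visualiser code. `softmax`, `predict_digit` and `cross_entropy` are hypothetical helper names, and the example scores are made up. It also suggests why the first logged loss sits near 2.30: a network that guesses uniformly across ten classes has a cross-entropy of ln(10) ≈ 2.3026.

```python
import math


def softmax(logits):
    """Convert raw scores into probabilities that sum to 1."""
    peak = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(value - peak) for value in logits]
    total = sum(exps)
    return [e / total for e in exps]


def predict_digit(logits):
    """Return (most likely digit, confidence) from ten logits."""
    probs = softmax(logits)
    digit = max(range(len(probs)), key=probs.__getitem__)
    return digit, probs[digit]


def cross_entropy(logits, target):
    """Loss for one sample: negative log-probability of the true class."""
    return -math.log(softmax(logits)[target])


# Made-up scores where the network favours the digit 7
example_logits = [0.1, -1.2, 0.3, 0.0, -0.5, 0.2, -0.8, 4.0, 0.4, 0.1]
digit, confidence = predict_digit(example_logits)
print(f"Predicted {digit} with confidence {confidence:.2f}")

# An untrained network is close to uniform guessing over ten classes
uniform_loss = cross_entropy([0.0] * 10, target=3)
print(f"Uniform-guess loss: {uniform_loss:.4f}")
```

With PyTorch the same confidences would normally come from `torch.softmax(output, dim=1)`; the plain-Python version is used here only to keep the sketch self-contained.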

This kind of training may be used in the Ableton AI assistant product that we are working on.

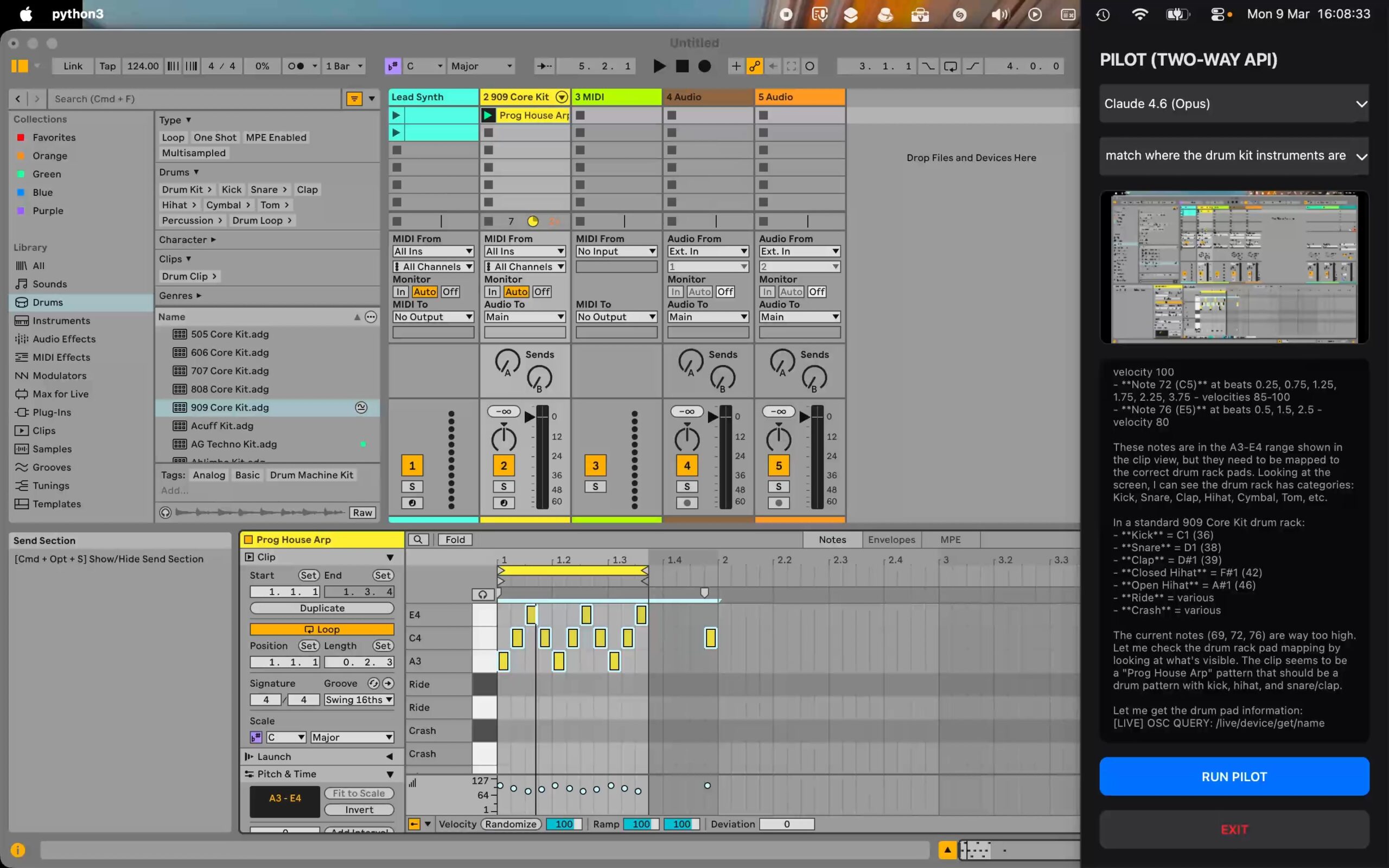

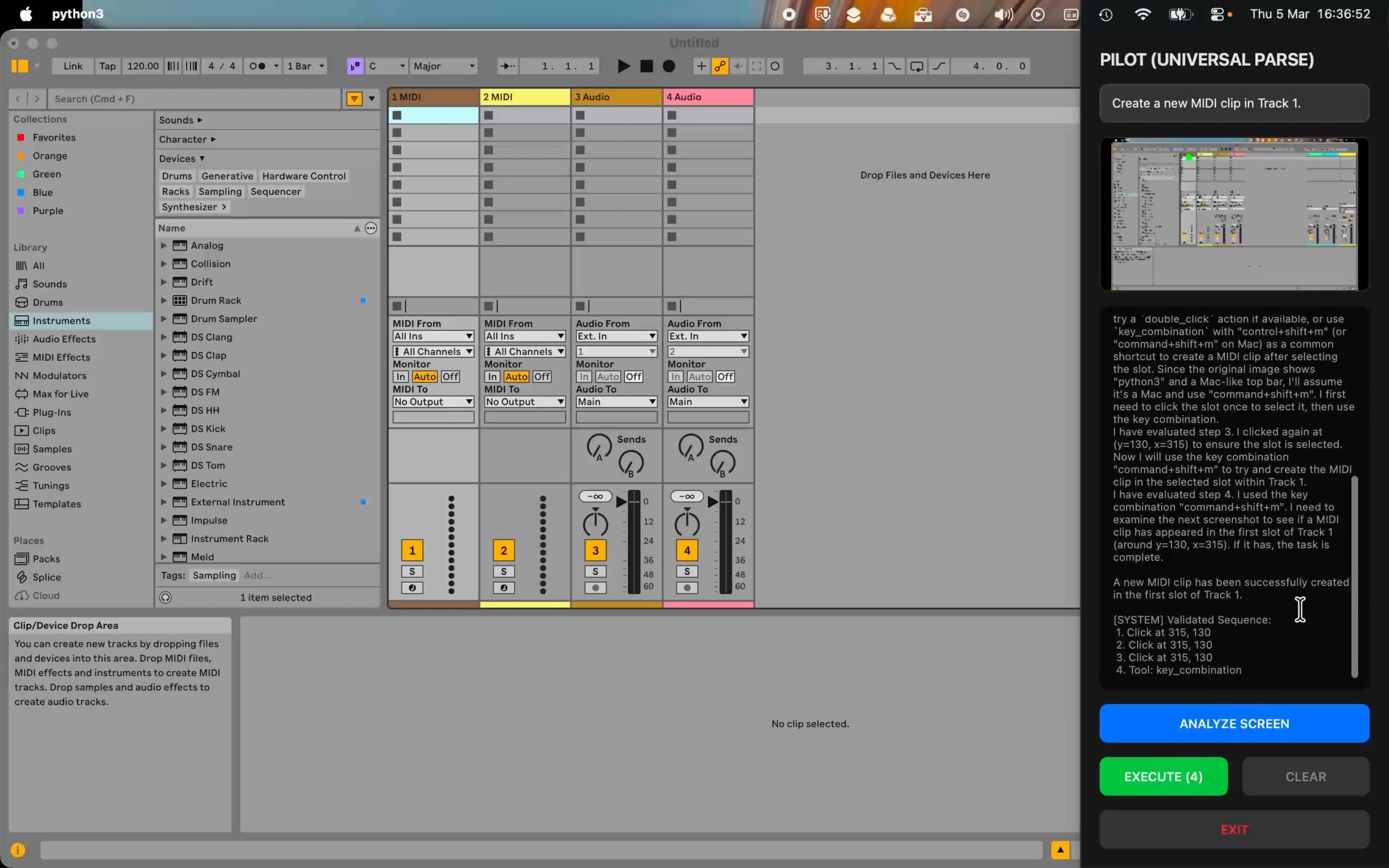

The product is in development and is not representative of the final product in either functionality or graphics (UI).

Product has moved beyond “mockup” stage.

The music at the start is just what I was playing at the time and is not coming from Ableton. I stop it near the end of the video, and from then on the audio is coming from Ableton.

Above is the first working prototype of a true AI assistant for music production. An AI that puts control back into the hands of the creative! Here’s some background.

My work on my BA(Hons) Creative Music Technology allowed me to verify where the tech industry has gone wrong with providing tools for DJs, musicians and artists. This led to the development of Phys DJ. An actual “physically modelled” DJ turntable.

Newsflash. Nobody has done this before!

Physical modelling is the same mathematical approach used by physical modelling synths that model a trumpet or a drum. In fact, one of the earliest examples of a physical model influenced the film 2001. I wrote about this in my final year dissertation for my BA(Hons) Creative Music Technology.

“The publics first exposure to physical modelling was when the film 2001: A space Odyssey was released (2001 1968). In the film HAL, the ship’s AI, is having his memory modules removed. He regresses to singing the song A Bicycle Built for Two. This is a reference to the first pioneering work on physically modelling of the human vocal tract in 1962. The software Max (by Cycling 74) is named after Max Mathews, a pioneer in computer music. In 1961 Max arranged a demonstration of technology that included a rendition of the song sung by a computer model of the vocal tract (Kelly 1962). The author Arthur C Clarke was present, and was so impressed that he included the song in the film 2001 to honour this achievement.”

FREEMAN, Michael. 2025. To what extent was the liveness of the DJ as instrumentalist discarded in deference to a technological “revolution”? Unpublished BA (Hons) dissertation. Falmouth University.

In fact, the Phys DJ prototype was developed, proven, tested and physically modelled in the Max software!

As part of this journey, after the release of ChatGPT and the resulting meteoric rise of AI, I quickly started using AI coding assistants in my university work. There are a lot of misconceptions about this use of AI, not helped by enormous marketing hype. The idea is still there that “AI can produce a full app”. This may be the case in some circumstances, but it kind of misses the creative potential of AI as an assistant. The misconception has carried over into music production, which is held back by “one click solutions” for mastering tracks and, even worse, tools that spit out entire tracks based on very little prompt interaction by the user. That’s OK. It has its place. What if you quickly want some background music for your video? But it probably won’t sound very creative. AI can’t create. Only humans can. AI by definition is not conscious, sentient or self-aware. But it is a very clever technology and is very good at assisting with coding.

It should be just as good at assisting a musician who is putting together a creative music track in Ableton Live, Apple Logic or FL Studio. Yet there’s very little out there! So I started looking into developing an AI agent that can act as an assistant for the musician.

“Create a skeleton drum and bass drum track.”

“OK cut up this sample of a drum kit and apply it to the MIDI sequence.”

And so on.

The musician would then manually create a synth line over the beats using their own virtuosity.

In fact there’s already something out there that proves all this is possible. The Perplexity Comet browser is very effective at operating entire complex websites. It has fixed a number of very thorny problems on Microsoft Azure for me.

I developed a working prototype using the Gemini 2.5 Computer Use model and tool, which is what is shown at the top of the page. Of course there’s a lot more work to do before it can help produce club-shaking Progressive House. But I think the approach is a sound one. The app could also be educational and help revive many musical arts that are in danger of getting lost. I’ve noticed a reduction in the quality of dance music releases over the years. Many of these styles use techniques similar to those of improvisational jazz: they are rarely well defined and rely on often unspoken conventions between DJ, musician and dancers around subtle rhythm development and language. An AI assistant could help save these valuable musical languages.

Freeman Marketing Services LTD is currently starting up and already has apps in development as well as assistance from Falmouth University Launchpad Futures.

This is through the attendance of the company CEO on an Entrepreneurship & Innovation Management MSc at Falmouth University.

Current product development took an unexpected turn during work on an artistic/graphic design app. The company software engineer (i.e. me! I also occasionally moonlight as the CEO 😄) needed a “helper app” to list all the available graphics filters in the Apple Core Image Filters API, along with examples of what they do. The CEO noticed that no such app is available to Apple developers and recognised a gap in the market.

So the first app to be released will be Core Image Filter Browser, which will be marketed to developers.

The current app icon design, which was made with the graphic design app that inspired Core Image Filter Browser.

Please note the following video shows Core Image Filter Browser in a production state and is not a final release version of the product.

Look out for the upcoming app releases! 😎